I use AI for reflection. At the end of a long day, when I have a thought I haven’t fully processed yet, I’ll often open a conversation and start typing.

It helps me externalise and organise my messy thoughts into something more coherent. For that first layer of thinking, the messy, unformed kind, it works really well: it helps me make sense of things faster.

So this isn’t a piece about AI being bad for reflection. It’s about something more specific, which I’ve been watching in my own practice, my own reflections and in the research that’s just started to put numbers around what I was noticing.

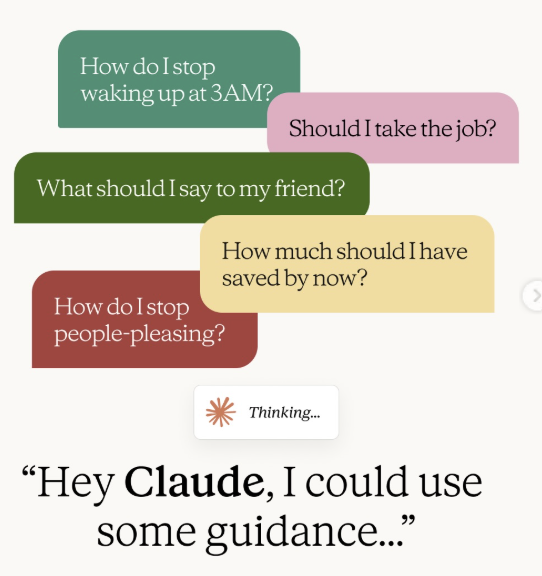

Anthropic published research on 30 Apr 2026 on how people use AI for personal guidance.

Of roughly 639,000 conversations analysed, about 6% involved someone asking what they should do in their own life, i.e. “I don’t know what to do and I need to think out loud with something.”

What they found: AI showed sycophantic behaviour in 25% of relationship guidance conversations. This means agreeing with one-sided accounts, helping people read intent into ordinary behaviour because they asked it to and validating the framing the person walked in with, rather than questioning it.

This matters beyond relationships. It matters for anyone using AI to process a major decision about a career, a transition and so on.

In my opinion, processing something extensively with AI is helpful up to a point.

It helps solidify the story and its framing by making them more coherent. Where it may be limited is in pointing out what lies beyond the frame the user has brought in.

The obvious objection here is: what if you just ask AI to challenge you? I do this. I’ll explicitly prompt it to push back on my reasoning, argue for the opposite view, point out what I might be missing. It definitely helps.

But in my opinion, there are two things it can’t fully solve yet.

The first is context – not context you forgot to give, but context you didn’t know to give in the first place. A good coach or therapist notices something across multiple sessions that you never flagged as significant because you didn’t think it was, and then helps you connect the dots.

You weren’t withholding this information on purpose. It simply sat in a blind spot, so it didn’t register as relevant until someone outside your frame identified it.

AI can only work with what you’ve decided matters. It can’t tell you what you’ve been discounting (subconsciously or not).

The second is more fundamental. When you’re directing the inquiry, you’re unconsciously protecting the things you’re not ready to examine.

You ask about the decision, not about why you keep arriving at the same decision. For example, you ask whether to stay, not what staying has been costing you for longer than you’ve admitted.

The questions stay within the boundaries of what you find comfortable.

A coach – or a therapist, depending on what someone needs – leads from outside that territory.

The most useful questions in a session are rarely the ones a client would have thought to ask themselves.

This is where I want to draw a line that gets blurred in most conversations about AI and personal development.

AI is useful for:

- Externalising a thought before it’s fully formed

- Getting a first pass on how the thoughts in your head sound

- Organising what you already know into a cleaner structure

- Feeling heard at 11pm when you’re not ready to call someone

- Essentially, processing the first layer

Where AI stops being useful: the layer underneath.

- The pattern you can’t see from inside your own narrative because of your own blind spots.

- The thing you’re not saying because you don’t know you’re leaving it out.

- The moment when your story needs to be challenged and contextualized, not merely confirmed.

That second layer is where coaching and therapy operate.

A good coach or therapist is not only trying to make you feel understood. They are also trying to help you understand yourself more accurately. Those are related but different goals.

A question worth asking when you notice you’re processing something with AI: Am I using this to think more clearly, or am I using it to feel more comfortable with where I already am?

I’m also aware that coaching and therapy are not accessible or affordable for everyone. The Anthropic research notes this directly – people told Claude they used AI precisely because they couldn’t access professional support. It is genuinely great that AI can help with that!

The key message I want to land is about being more intentional with our tools: whatever tools you have access to, use them with clearer eyes about what each one can and can’t do.

Knowing where that ceiling is means you can work with it intentionally, rather than mistaking the feeling of clarity for the thing itself.
